I am running a one-way nested simulation over Spain with a 5 km outer domain (d01) and a 1 km inner domain (d02). The outer domain ran fine and its wrfout files look correct.

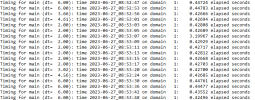

However, after running ndown.exe and then wrf.exe for the inner domain, the d02 wrfout files are completely empty (except for the first one).

I am using an adaptive time step and have tried lowering min_time_step, but nothing changes when I run wrf.exe again. I did not re-run real.exe; do I need to run it again too? I have attached the error and namelist files for both domains in case there is any inconsistency. I can't attach the wrfbdy file because it's too large.

Could it be related to the size or position of d02 relative to d01, for example if there is not enough space between the boundaries of the two domains?
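To make the question about spacing concrete, here is a minimal Python sketch of how I would estimate the margin (in parent grid cells) between each edge of d02 and the corresponding edge of d01. The function name `nest_margins` and the example numbers are hypothetical placeholders, not values from my namelists. It assumes the usual meaning of the namelist entries `e_we`, `e_sn`, `i_parent_start`, `j_parent_start` and `parent_grid_ratio`, and that `e_we - 1` and `e_sn - 1` of the nest are multiples of the ratio.

```python
def nest_margins(e_we_parent, e_sn_parent, e_we_nest, e_sn_nest,
                 i_parent_start, j_parent_start, parent_grid_ratio):
    """Return the number of parent grid cells between each nest edge
    and the matching parent edge (assumed interpretation of the namelist)."""
    # Nest extent expressed in parent grid cells
    nest_cells_x = (e_we_nest - 1) // parent_grid_ratio
    nest_cells_y = (e_sn_nest - 1) // parent_grid_ratio

    # i_parent_start / j_parent_start are 1-based indices of the nest's
    # lower-left corner in the parent grid
    west = i_parent_start - 1
    south = j_parent_start - 1
    east = (e_we_parent - 1) - (west + nest_cells_x)
    north = (e_sn_parent - 1) - (south + nest_cells_y)
    return {"west": west, "east": east, "south": south, "north": north}


# Hypothetical example values, NOT taken from the attached namelists
margins = nest_margins(e_we_parent=100, e_sn_parent=100,
                       e_we_nest=151, e_sn_nest=151,
                       i_parent_start=30, j_parent_start=30,
                       parent_grid_ratio=5)
print(margins)
```

If any of these margins turns out to be only a few parent cells, that would be the situation I am worried about.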

Hope someone can help,

thank you.
