Hi,

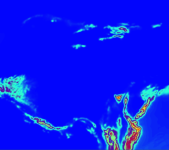

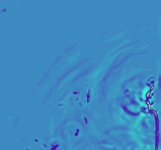

I would like to run a high-resolution (2-3 km) forecast for the Sierras, nesting down from GFS -> ~9 km -> ~3 km. Because the range is so large, I cannot place the inner domain's border entirely away from mountainous terrain. It has to cross the mountains somewhere, and the crashes always occur on that border.

Is there a way to smooth the terrain near the nest border more strongly without smoothing the whole inner domain's terrain? The area of interest is a long way from the border, so I think it should be outside the area of influence of any extra smoothing there. Is there an option like smooth_cg_topo for nest borders?

Otherwise, can I go directly from GFS to 2-3 km?
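
To make the border-only smoothing idea concrete, here is a minimal sketch of what I have in mind, written with plain NumPy. It is not a WPS or WRF feature: the function name `smooth_near_border` and its `width` and `passes` parameters are hypothetical, and I am assuming the nest terrain has already been loaded as a 2-D array (for example from the `HGT_M` field of the nest's geo_em file) and would be written back by other means. The terrain is smoothed with a simple 5-point filter, and the smoothed field is blended in with a weight that is 1 on the border and falls linearly to 0 at `width` cells inward, so the interior is left untouched.

```python
import numpy as np


def smooth_near_border(hgt, width=10, passes=5):
    """Smooth a 2-D terrain array only within `width` cells of its edge.

    Hypothetical helper for illustration; not part of WPS or WRF.
    """
    hgt = np.asarray(hgt, dtype=float)
    ny, nx = hgt.shape

    # Distance (in grid cells) of every point from the nearest domain edge.
    j = np.arange(ny)[:, None]
    i = np.arange(nx)[None, :]
    dist = np.minimum(np.minimum(j, ny - 1 - j), np.minimum(i, nx - 1 - i))

    # Weight is 1 on the border and ramps linearly to 0 at `width` cells in.
    weight = np.clip(1.0 - dist / float(width), 0.0, 1.0)

    smoothed = hgt.copy()
    for _ in range(passes):
        # 5-point weighted average; edge values are repeated at the boundary.
        p = np.pad(smoothed, 1, mode="edge")
        smoothed = (
            4.0 * p[1:-1, 1:-1]
            + p[:-2, 1:-1] + p[2:, 1:-1]
            + p[1:-1, :-2] + p[1:-1, 2:]
        ) / 8.0

    # Blend: fully smoothed on the border, original terrain in the interior.
    return weight * smoothed + (1.0 - weight) * hgt
```

Calling `smooth_near_border(hgt, width=10, passes=5)` would return an array of the same shape in which only the outer 10 rows and columns differ from the input. Whether WRF would then run stably with terrain modified this way is exactly what I am unsure about.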
