Hello,

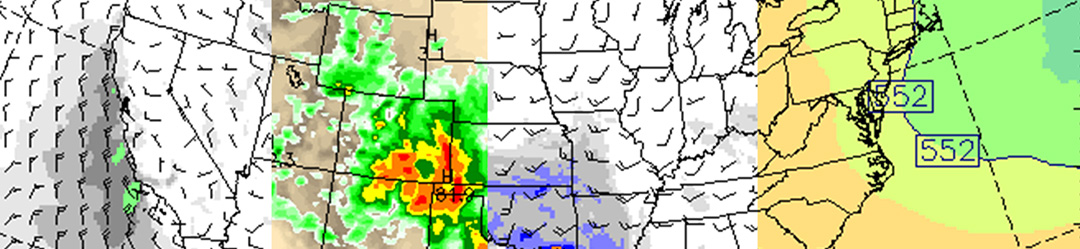

I have a problem with simulation crashes in WRF version 4.4.2 with SLUCM; the crashes typically occur in summer. The only relevant output in the rsl files is an exceedance of the vertical CFL condition at some grid points, but neither decreasing the time step nor setting epssm=0.4 solves the problem. When I investigated further, I noticed that the TSK array looks strange in the last hour before the crash: in some urban areas, temperatures are higher than 340 K (!); see the attached figure (domain in central Europe). Related arrays, such as the sensible and latent heat fluxes, also take unrealistic values.
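For anyone who wants to run the same kind of TSK check on their own output, here is a minimal sketch. It assumes TSK has already been read into a NumPy array with dimensions (Time, south_north, west_east); the function name `find_hot_cells`, the file name in the comment, and the synthetic test array are only illustrative, and the 340 K threshold is simply the value mentioned above.

```python
import numpy as np


def find_hot_cells(tsk, threshold=340.0):
    """Return indices (time, south_north, west_east) where TSK exceeds threshold [K].

    `tsk` is assumed to be a 3-D array of skin temperature in kelvin.
    """
    tsk = np.asarray(tsk, dtype=float)
    # argwhere returns one row of indices per grid point above the threshold
    return np.argwhere(tsk > threshold)


# With real output, the array could be loaded e.g. via netCDF4 (hypothetical file name):
#   from netCDF4 import Dataset
#   tsk = Dataset("wrfout_d01_...").variables["TSK"][:]
# Here a small synthetic array stands in for the model output.
tsk = np.full((2, 3, 4), 300.0)   # 2 times, 3 x 4 grid, all at 300 K
tsk[1, 2, 0] = 345.0              # one suspicious grid point at the second time

hot = find_hot_cells(tsk)
for t, j, i in hot:
    print(f"time index {t}, south_north {j}, west_east {i}: TSK = {tsk[t, j, i]:.1f} K")
```

Applying the same function to the sensible and latent heat flux arrays, with suitable thresholds, would show whether the affected grid points coincide.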

The same occurs in version 4.5.0; the last version that ran without errors (and with realistic TSK) is 4.3.3. The namelist with my settings is attached.

Has anybody had a similar experience, or does anybody know how to fix it?

Thanks, Jan
